Seriously?

It is hardly surprising that, with a corporate mindset, people are hired for their ability to follow instructions and protocols rather than for their own initiative. However, when I actually thought about the current state of mobile technologies, it became evident that with so many different technologies and terminologies floating around, the field is simply not meant for easy and painless comprehension.

Say, Vodafone NZ operates concurrent networks: 2G GSM with EDGE at 900MHz and 1800MHz, as well as a W-CDMA based, HSDPA capable 3G UMTS network on 900MHz and 2100... Well, I should probably stop here.

Much of the confusion has been the result of bureaucratic red tape, political rivalry, human greed and outright stupidity. Hence this new post series is going to strip these terms down to the basics in an attempt to explain them.

Depending on the context, this information could matter a lot or a little to the end user. For example, iPhone owners may find themselves with no 3G coverage while their friends with a $99 Nokia phone get 3G practically anywhere. Nevertheless, if the only activities on their mobile are plain calling and text messages, it is hardly an issue.

Before we head into the confusing world of mobile telecommunication, let us look at the earlier iterations of the copper-based phone system.

Early telephone services were nothing but a simple mesh network of interconnected phones, with your phone physically linked to all your friends' homes. Using a bit of simple math, we can soon work out that the number of wires required for n users is n(n-1)/2. The situation soon became unmanageable as every possible wiring space filled up with cables; something had to be done.
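To get a feel for how quickly the wiring gets out of hand, here is a minimal Python sketch of the n(n-1)/2 formula. The function name `mesh_wires` and the sample user counts are my own picks for illustration only.

```python
def mesh_wires(n: int) -> int:
    """Wires needed when each of n phones is linked directly to every other phone."""
    if n < 0:
        raise ValueError("number of users cannot be negative")
    # Each of the n phones pairs with the other n - 1; every wire is shared by two phones.
    return n * (n - 1) // 2


if __name__ == "__main__":
    # Sample user counts, chosen arbitrarily for illustration.
    for users in (2, 10, 100, 1000):
        print(f"{users:>5} users need {mesh_wires(users):>7} wires")
```

Ten users need 45 wires, while a thousand users would already need 499,500, which is why the full mesh was never going to last.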

I had to use a less impressive modern example because I cannot find the file photo; for now just imagine the same tangle 10 times bigger.

Then some genius came up with the idea of the telephone exchange, which used human power to physically connect calls by forming circuits.

The Nutt sisters were the first female telephone operators, brought in to replace teenage boys with poor manners; well, you cannot expect good manners from a teenager on minimum wage, women are more willing to submit; need proof?

Although the technology of the phone exchange has advanced greatly over the last century, with mechanical and then electronic means replacing maidens' hands, the "hub and spoke" model has survived largely intact in many other forms of network beyond voice and data.

---------------------------------------------------------------------------

As an oversimplified rule of thumb, a higher frequency means a shorter transmission distance and a higher bandwidth. A few examples:

Long wave signals travel along the contours of the earth, and they require very high masts. Transmissions can be picked up from 3000km away on a good day.

Junglinster long wave station

Short wave, on the other hand, gets around by being reflected between the ground and the ionised layer of the atmosphere. Hence it has a range of tens of thousands of kilometers and remains the standard band for international broadcasts in this digital age.

Radio Canada's short wave towers

Microwave transmissions usually travel by line of sight, hence they have a limited range, usually less than 50km. Nevertheless they offer excellent spectrum density and are less affected by weather.

Your average rooftop microwave mast, very likely to be a cellphone mast as well; note the drum-like relay antenna and the triangular panel antenna
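The line-of-sight limit on microwave links can be roughed out with plain geometry. The sketch below is only an approximation under my own assumptions: a perfectly smooth Earth with a mean radius of 6371km, a receiver at ground level, and no atmospheric bending or terrain. The name `horizon_km` is made up for this example.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius, an assumed round figure


def horizon_km(mast_height_m: float) -> float:
    """Straight-line distance from the top of a mast to the horizon, in km."""
    if mast_height_m < 0:
        raise ValueError("mast height cannot be negative")
    # Pythagoras on the triangle formed by the Earth's centre, the mast top
    # and the point where the line of sight touches the ground.
    distance_m = math.sqrt(2 * EARTH_RADIUS_M * mast_height_m + mast_height_m ** 2)
    return distance_m / 1000


if __name__ == "__main__":
    # Mast heights picked arbitrarily for illustration.
    for height in (10, 30, 100, 200):
        print(f"{height:>4} m mast: horizon at roughly {horizon_km(height):.1f} km")
```

Under these assumptions a 100m mast sees a little under 36km, which is in the same ballpark as the 50km figure above.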

With even higher frequencies, range is more limited still and absorption by rain and other obstacles becomes problematic. However, they can be put to good use in applications such as short range remote controls.

Infrared is invisible to human eyes; however, most digital cameras will notice it

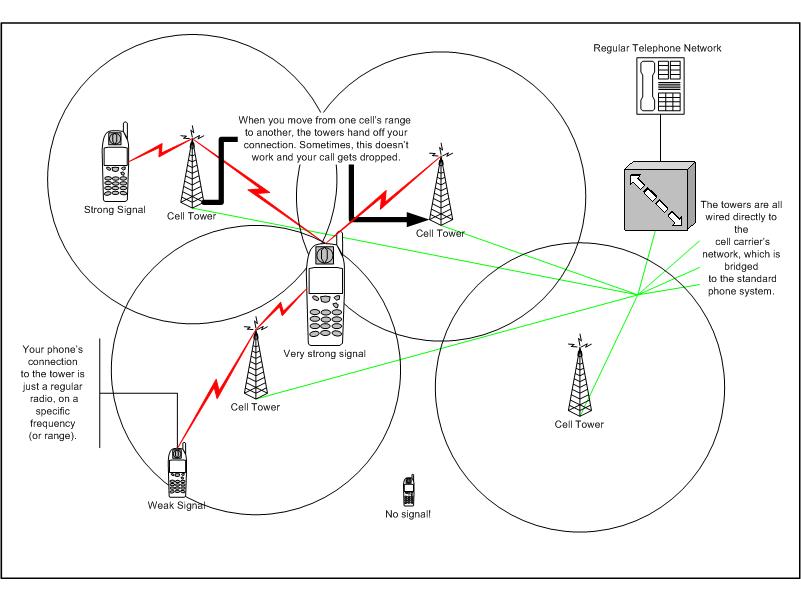

Hence, it is no accident that mobile phones use a small section of the microwave spectrum known as UHF, which offers good range as well as the ability to carry multiple calls from one station. Early radio phones were nothing more than small radio transceivers connected to the phone exchange system. Many radio masts, known as base stations, each provide service to one area known as a cell and maintain a connection to each phone over small channels of allocated frequency. Calls are handed over to another base station once the user travels into a different cell; because the same channel could already be in use by another device there, the latecomer has to be allocated a different channel.

All was well when cellular phones were few and powerful, such as car phones and large handsets about the size of a hock of ham. Coverage was excellent as one major base station could cover a large radius. For example, Telecom used to have one base station on top of the Sky Tower for the entire central Auckland; nowadays the same area is served by hundreds to thousands of masts, yet call quality is hardly better than what it used to be.

The main reason behind the evolution is that as the number of users increased, existing stations ran out of capacity for calls. Their cells had to be divided into smaller ones, with some tedious math applied to make sure there are enough channels to go around.
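For a taste of that tedious math, here is a rough sketch of the textbook frequency reuse calculation. It assumes an idealised grid of equal hexagonal cells, which no real city resembles, and every number in it (420 channels, the cell counts) is invented for illustration rather than taken from any operator. The function names are hypothetical too.

```python
import math


def cluster_sizes(limit: int) -> list[int]:
    """Cluster sizes N = i*i + i*j + j*j that tile a hexagonal grid, for i, j up to limit."""
    sizes = {i * i + i * j + j * j for i in range(limit + 1) for j in range(limit + 1)}
    sizes.discard(0)  # i = j = 0 is not a real cluster
    return sorted(sizes)


def channels_per_cell(total_channels: int, cluster_size: int) -> int:
    """Channels left for each cell when the spectrum is shared across one cluster."""
    return total_channels // cluster_size


def reuse_distance(cell_radius_km: float, cluster_size: int) -> float:
    """Distance between the centres of two cells allowed to reuse the same channels."""
    return cell_radius_km * math.sqrt(3 * cluster_size)


def area_capacity(cells_in_area: int, total_channels: int, cluster_size: int) -> int:
    """Simultaneous calls an area can carry once it is cut into cells_in_area cells."""
    return cells_in_area * channels_per_cell(total_channels, cluster_size)


if __name__ == "__main__":
    total = 420   # made-up number of channels owned by the operator
    cluster = 7   # a commonly quoted cluster size
    print("valid cluster sizes:", cluster_sizes(3))
    print("channels per cell:", channels_per_cell(total, cluster))
    for cells in (7, 70, 700):
        print(f"{cells:>4} cells carry up to {area_capacity(cells, total, cluster)} calls")
```

The point of the exercise: each cell only gets a fraction of the channels, but because distant cells can reuse them, cutting the same area into more and smaller cells multiplies the number of calls it can carry.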

In turn, the smaller cells require less power, which led to the further miniaturisation of cell phones; the first generation of true handsets was born.

From left: Motorola Droid (made in 2009), Dr Martin Cooper (born in 1928), DynaTAC prototype (first used in 1973)

Clearly, more measures were needed to fit more users into the finite space of radio frequencies. Digital algorithms are used to compress the voice signal into smaller channels, and calls are modulated together to use the transmission as efficiently as the laws of physics allow; however, the number of calls that can be stuffed into one band of frequencies is still limited. Giving each call its own slice of frequency in this way is known as Frequency-Division Multiplexing, or FDM.

A cunning way to get around the issue is called Time-Division Multiplexing (TDM), where each phone is allowed a time slot on the same frequency, maximising use of the same channel. The competing standard is known as Code-Division Multiplexing (CDM). Without going too far into the technicalities, imagine FDM as an attempt at dividing a large hall into tiny cubicles so the occupants will not speak over each other's voices, TDM as the same hall full of people taking turns to speak, and CDM as everybody talking at the same time, albeit each in a different dialect to avoid confusion. Most current technologies use one of these methods or a combination of two or more.

For the mathematically minded
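The "different dialects" of CDM can be shown with a toy example. This is a heavily simplified sketch, not how any real network is implemented: it assumes three users who are perfectly synchronised, a noiseless channel, and short orthogonal codes taken from the rows of a 4x4 Walsh-Hadamard matrix. The user names, messages and function names are all invented for the demonstration.

```python
# Each user gets a code that is orthogonal to the others:
# multiply any two different codes element by element and the sum is zero.
CODES = {
    "A": [1, 1, 1, 1],
    "B": [1, -1, 1, -1],
    "C": [1, 1, -1, -1],
}


def spread(bits: list[int], code: list[int]) -> list[int]:
    """Turn each bit into a run of chips: the code for a 1, the negated code for a 0."""
    chips = []
    for bit in bits:
        symbol = 1 if bit else -1
        chips.extend(symbol * c for c in code)
    return chips


def combine(signals: list[list[int]]) -> list[int]:
    """Everybody talks at once: the channel simply adds the signals together."""
    return [sum(chips) for chips in zip(*signals)]


def despread(channel: list[int], code: list[int]) -> list[int]:
    """Recover one user's bits by correlating the channel with that user's code."""
    size = len(code)
    bits = []
    for start in range(0, len(channel), size):
        block = channel[start:start + size]
        correlation = sum(x * c for x, c in zip(block, code))
        bits.append(1 if correlation > 0 else 0)
    return bits


if __name__ == "__main__":
    messages = {"A": [1, 0, 1], "B": [0, 0, 1], "C": [1, 1, 0]}
    channel = combine([spread(bits, CODES[user]) for user, bits in messages.items()])
    print("what is on the air:", channel)
    for user, bits in messages.items():
        print(user, "sent", bits, "and the receiver heard", despread(channel, CODES[user]))
```

Because the codes are orthogonal, the other users' contributions cancel out during correlation and each receiver hears only its own "dialect".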